Eugene Quinn for East Greenwich Schools

Student Growth Percentile (SGP) Research

In 2015 I conducted a summer research project that investigated the operating characteristics of the SGP measure.

When the subject of teacher evaluation systems came up at a town council meeting some years ago, the speaker (the late David Abbott) emphasized the complexity of these systems.

Over the last 20 years, there has been a great deal of interest in developing teacher evaluation systems that use a class of statistical models based on test scores known as "Value-Added Methods" or VAM.

Like many in the statistical community, I viewed claims about the capabilities of these models with skepticism, but VAM had so much momentum at that point that it was unstoppable. However, as more experience accumulated, the shortcomings of VAM became apparent. In 2014, the American Statistical Association issued a cautionary statement on VAM.

While not a VAM measure, the Student Growth Percentile (SGP), also known as the Colorado Growth Model (or even the Rhode Island Growth Model), experienced a similar trajectory, going quickly from a pilot program to automation and implementation by more than 20 states as one of the primary measures of a student's educational progress.

The rapid adoption of SGP was partly a result of the requirements of the "Race to the Top" program, and partly because the developers released the SGP software as a freely available open source package in the R Statistical System.

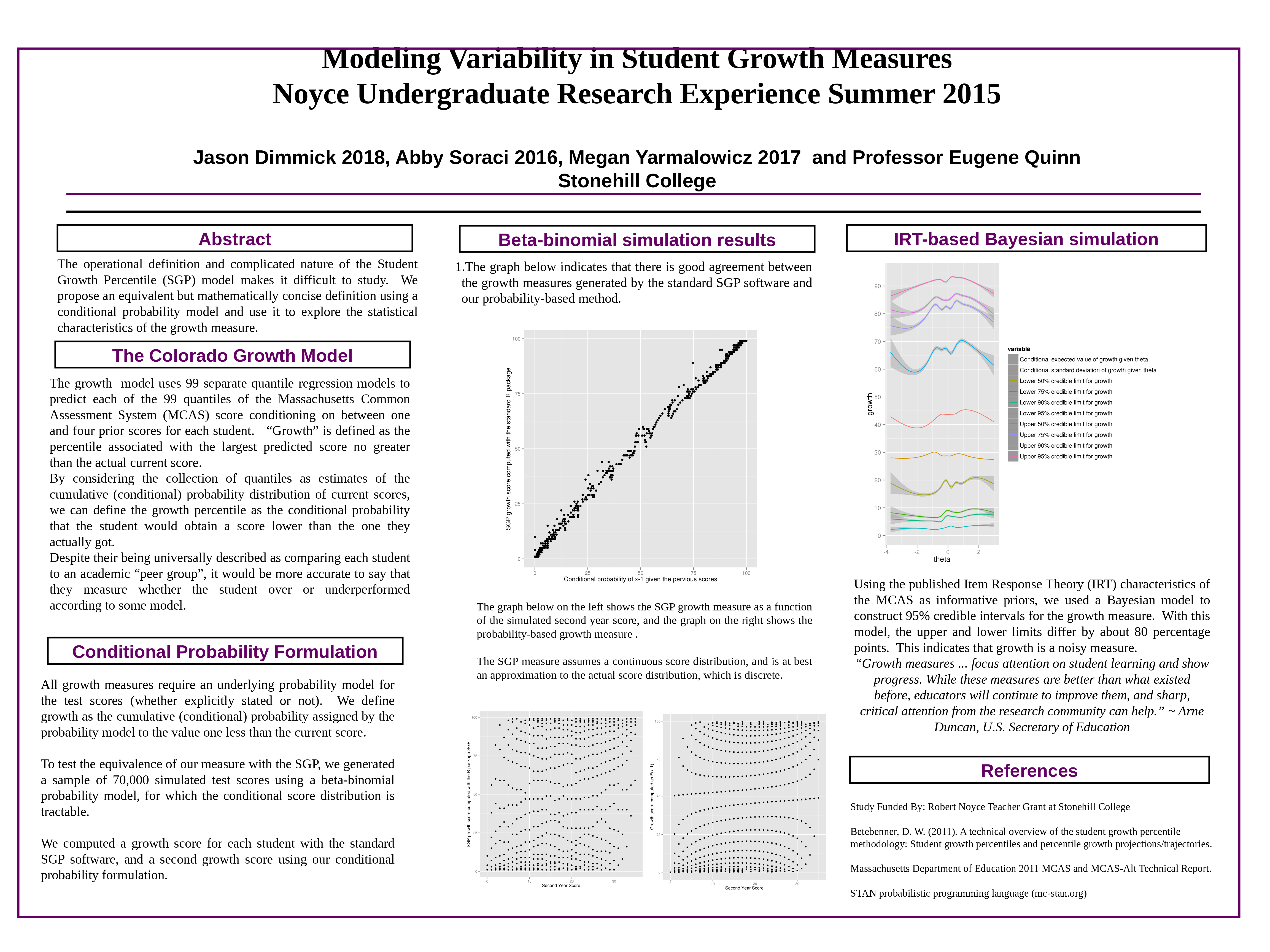

In the summer of 2015 I did a research project with three students working towards teacher certification to investigate the operational characteristics of the SGP measure.

The complexity of the SGP made it a challenge to model. The SGP uses a technique called quantile regression that, in theory, predicts quantiles rather than expected values. The SGP model does ninety-nine of these for each student, predicting the first percentile, the second percentile, and so on up to the 99th percentile, based on the student's past MCAS scores. Then it uses cubic splines to smooth the results. The percentile the model predicts for the student's actual MCAS score is reported as 'growth'.
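To illustrate what "predicting a quantile rather than an expected value" means, here is a minimal Python sketch. It is not the SGP software and uses no predictors or splines: it only shows that minimizing the check (pinball) loss that quantile regression is built on recovers a sample quantile. The function names and the simulated scores are hypothetical.

```python
import random

def pinball_loss(residual, tau):
    """Check (pinball) loss for one residual (observed - predicted) at level tau."""
    return tau * residual if residual >= 0 else (tau - 1) * residual

def fit_constant_quantile(scores, tau):
    """Return the observed score that minimizes the total pinball loss.

    With no predictors, quantile regression reduces to choosing a single
    constant, and the minimizer is a sample tau-quantile.
    """
    return min(scores, key=lambda c: sum(pinball_loss(y - c, tau) for y in scores))

random.seed(0)
# Hypothetical scaled scores for illustration only, not real MCAS data.
scores = [random.gauss(240, 15) for _ in range(501)]

q10 = fit_constant_quantile(scores, 0.10)
q50 = fit_constant_quantile(scores, 0.50)
q90 = fit_constant_quantile(scores, 0.90)

for tau, q in [(0.10, q10), (0.50, q50), (0.90, q90)]:
    share_below = sum(y < q for y in scores) / len(scores)
    print(f"tau={tau:.2f}: fitted value {q:.1f}, share of scores below {share_below:.3f}")
```

Changing `tau` moves the fitted value through the distribution, which is the sense in which ninety-nine separate fits trace out the 1st through 99th percentiles.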

I know this sounds different from what the official documentation says, but the actual process is so technical and so complicated that there is no way to explain the mechanics to a lay audience.

We developed a simpler model of the SGP measure as a conditional probability. If you assume that the quantile regressions produce the correct percentiles (a bit of a stretch because the technique assumes a continuous underlying measure), you can express the SGP score as the conditional probability of a student getting an MCAS score lower than the one actually earned, given that student's previous MCAS scores.
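One way to write this definition in symbols is shown below. The notation is illustrative and assumed here: $Y_t$ is the current-year MCAS score treated as a random variable, $y_t$ is the score the student actually earned, and $y_{t-1}, y_{t-2}, \ldots$ are the student's earlier scores. The factor of 100 is an assumption that simply puts the probability on a percentile scale.

$$
\mathrm{SGP} \;=\; 100 \cdot P\!\left(Y_t < y_t \,\middle|\, Y_{t-1} = y_{t-1},\; Y_{t-2} = y_{t-2},\; \ldots\right)
$$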

Then we used the Item Response Theory (IRT) parameters of the Massachusetts Comprehensive Assessment System (MCAS) to simulate three years of MCAS results for 70,000 students (the size of a Massachusetts grade cohort).
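A simulation of this kind can be sketched in a few lines of Python. The sketch below is only an assumption-laden illustration: it uses a two-parameter logistic (2PL) item model, made-up item parameters, a small cohort, and abilities held fixed across years, none of which is taken from the MCAS technical documentation or from the project itself.

```python
import math
import random

def p_correct(theta, a, b):
    """2PL item response function: probability of a correct answer
    for ability theta, discrimination a, and difficulty b."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def simulate_raw_score(theta, items, rng):
    """Simulate one test administration and return the number of items correct."""
    return sum(rng.random() < p_correct(theta, a, b) for a, b in items)

rng = random.Random(2015)

# Hypothetical item parameters (a, b) for a 40-item test.
items = [(rng.uniform(0.5, 2.0), rng.gauss(0.0, 1.0)) for _ in range(40)]

# Hypothetical cohort of 1,000 students with standard normal abilities.
abilities = [rng.gauss(0.0, 1.0) for _ in range(1000)]

# Three years of raw scores per student; ability is held constant across
# years here purely to keep the sketch short.
years = 3
scores = [
    [simulate_raw_score(theta, items, rng) for _ in range(years)]
    for theta in abilities
]

print(scores[:3])
```

Because each simulated score is a sum of random item responses, two students with the same ability can earn different scores, which is the measurement noise that carries through to any growth measure computed from them.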

From the simulated MCAS test scores, we generated SGP scores for each "student" using the actual SGP software, with a slight modification to display more of the internal computations.

Finally, we did a Bayesian statistical analysis of the SGP scores.

We found good agreement between our definition and the simulated data, and were able to produce interval estimates for the SGP scores indicating that the SGP is a noisy measure, with a 95% credible interval 80 percentage points wide.

At the 2014 American Statistical Association Joint Meetings, I spoke with Dan McCaffrey of ETS, who has done extensive work on VAM. He told me that every independent researcher who has investigated the precision of the SGP has concluded that, for an individual student score, the 95% confidence interval is 0 to 100.

2016 New England Statistics Symposium Poster Presentation

I presented our results in this poster at the New England Statistics Symposium at Yale University.